This week I have been reviewing the literature on evidence, and its role in informing policy and practice in the context of Scotland’s social services. Evidence-based approaches have been borrowed from medicine where they have existed for centuries, although they have only been explicitly labelled ‘evidence-based’ since the early 1990s (Claridge & Fabian, 2005). This approach to the delivery of services can be defined as “the conscientious, explicit and judicious use of current best evidence in making decisions about the care of individuals” (Sackett, Richardson, Rosenberg, & Haynes, 1997 quoted in Johnson & Austin, 2005, p. 4). In light of this definition, the idea of utilising evidence-based approaches in social services, which has had a presence in UK Government discourse since the mid-1990s, seems logical. The aim is to increase the efficiency and effectiveness of social services, in order to improve the level of care they are able to provide. This remains a popular goal given the context of reduced public sector budgets across the UK (The Scottish Government, 2011). It also accords with the move towards greater and more transparent accountability in the spending of public money, which has been a powerful public sector reform discourse since the 1980s (Munro, 2004). This has led to the view that policy and commissioning decisions, as well as everyday social service practice, should be based on, or informed and enriched by, the ‘best’ available evidence.

Again, this might seem logical, even obvious. As Oliver Letwin, Minister for Government Policy, said of evidence-based policy and practice at the 2013 launch of the What Works Centres:

once you’ve decided that you’re trying to achieve something…it does make abundant sense to try and find out whether the thing you are doing to achieve it has actually shown that it is capable of achieving it, and then to adjust it or remove it if it hasn’t, and reinforce it if it has…this is blindingly obvious stuff and I just feel ashamed, on behalf of not just our country but actually every country in the world almost, that this is regarded as revolutionary. It ought to be regarded as entirely commonplace.

And yet evidence-based policy and practice remain elusive goals in many social service contexts (Johnson & Austin, 2005, p. 12). My aim here is to isolate one possible explanation for this apparent difficulty, which is particularly relevant to the social work profession. For the purposes of this discussion I will use the What Works Centre view of evidence as a product or output upon which to base a judgment or decision, although I am aware that this definition is subject to debate and criticism.

Difficulties in implementing evidence-based approaches may exist due to the presence of hierarchies of research design in the area of evaluation and efficacy research. These hierarchies tend to prioritize experimental studies, or systematic reviews of existing experimental studies on a particular intervention (Johnson & Austin, 2005; The Social Research Unit at Dartington, 2013). However, such a hierarchy may be problematic if we are trying to encourage the use of evidence in a social work context. First, from the perspective of evidence-based policy, experimental studies such as Randomized Controlled Trials (RCTs) can be extremely useful for telling us whether or not an intervention works; i.e. achieves the outcomes we have assessed it against. However, this research design may be less adept at answering a host of other questions, which need to be answered if a social work intervention is to be rolled out across services as best practice (Cartwright, 2013). For instance, we need to understand why this intervention has or has not worked, and to understand, in greater detail, how it works. We also need to understand who the intervention has worked for, when, and where. The who question enables us to identify which client group(s) the intervention has been used with, and the extent to which their characteristics and needs can be viewed as typical across a range of social work clients. Issues of spatiality and temporality are particularly important if we seek to scale up an intervention. Put another way, what we are trying to get at is both the causal ingredient – i.e. the thing to which we can attribute the effectiveness of our intervention – and the necessary support factors required for this causal relationship to hold (Otto & Ziegler, 2008; Cartwright, 2013). These support factors could include organisational culture, the individual attributes of the practitioners involved, the practitioner-client dynamics, the availability of the necessary resources and so forth.
RCTs alone cannot provide us with all of this information. We require a methodological mix of research, capable of providing a more holistic picture, including evidence regarding the transportability of an intervention. Thus there is a need for greater recognition of the role of other study designs, and indeed of mixed-method evaluations, for successful evidence-based policy in social work.

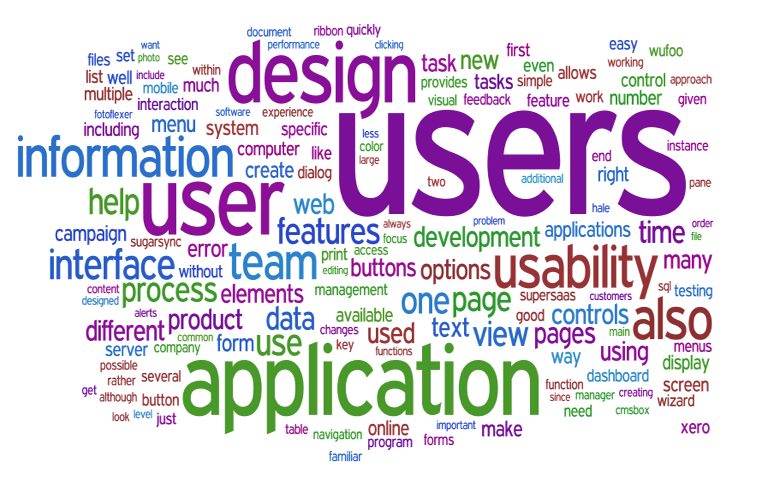

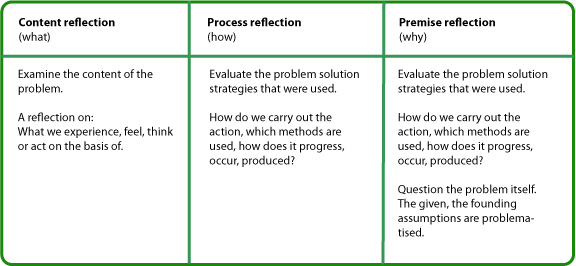

Greater encouragement of mixed-method approaches may also be helpful in addressing a second concern about the research design hierarchy, which pertains to its impact on evidence-based practice. There may be a disjuncture between the ontological and epistemological positions which underpin experimental methods – that is, the views about what kinds of things there are in the world, and how we can come to ‘know’ them – and the ontological, epistemological, professional and value-orientated views of social workers. In order to make decisions, social workers will draw on, and piece together, a variety of different types of knowledge and evidence (Collins & Daly, 2011). This will include case notes from a range of professionals, their own observations and previous knowledge and experiences, and service user views. The interpretive skills of social workers are therefore paramount in making sense of the complex, multi-faceted and, often, incomplete picture they have of a client or situation (Shonkoff, 2000; Otto & Ziegler, 2008). These are skills that may be more closely aligned with interpretive research traditions, which seek to understand and interpret the actions and meanings of agents, and advocate the existence of multiple truths. If social workers are to make better use of research-based evidence, it needs to be capable of improving their understanding of complex and fluctuating scenarios.

That is not to suggest that RCTs have no role to play; they do. They are useful for telling us what works, and we should encourage greater quantitative literacy amongst social workers so that they can read the latest evidence on interventions and reach their own conclusions about them. However, on their own RCTs may be insufficient to develop evidence-based approaches in social work. Not only are they unable to provide adequate detail of the conditions needed for causal factors to operate, which is crucial in a social service context characterized by diversity and contextual nuance, but such research may also seem ontologically and epistemologically removed from the professional foundations of social work. For evidence-based approaches to thrive we need practitioners on side. So, as well as continuing with RCTs which look at what works and encouraging quantitative literacy amongst the social service workforce, we also need to encourage the use of other, and mixed, methodological approaches (Cartwright, 2013). Not only will this make better use of the proficient interpretive skills social workers already have (Nutley et al., 2010, pp. 135-6), it will also provide the well-rounded, nuanced evidence that is required for evidence-based approaches to be more valuable in a social work context.

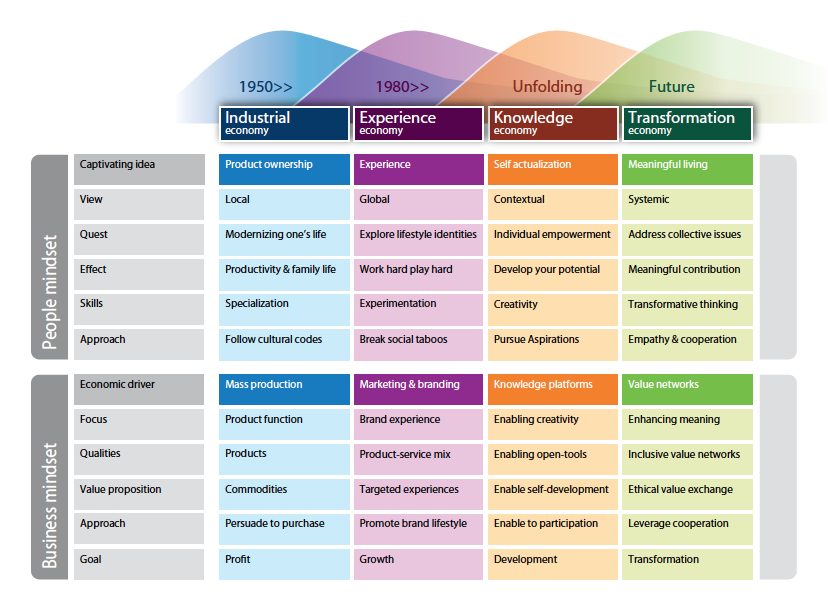

In relation to the Evidence and Innovation Project at Iriss, this discussion suggests that there may be a role for innovation in informing and inspiring new methodological approaches and combinations in order to improve the effectiveness and take-up of evidence-based approaches in a social work context. It might also be the case that the view of evidence underpinning the ‘What Works’ agenda – i.e. one which situates evidence as a product or output upon which to base a decision or judgment – has limitations. In the context of the Evidence and Innovation Project, it may be important to explore other, broader, and potentially ‘innovative’ understandings of what evidence is and can be used for. These issues will guide my thinking over the coming weeks and will be returned to in future blog posts.

Jodie Pennacchia

The University of Nottingham

Follow: @jpennacchia

References

Cartwright, N (2013), Knowing what we are talking about: why evidence doesn’t always travel, Evidence & Policy, 9:1, pp. 97-112.

Claridge, J and Fabian, T (2005), History and Development of evidence-based medicine, World Journal of Surgery, 29:5, pp. 547-553.

Cnaan, R and Dichter, M E (2008), Thoughts on the Use of Knowledge in Social Work Practice, Research on Social Work Practice, 18:4, pp. 278-284.

Collins, C and Daly, E (2011), Decision making and social work in Scotland: The role of evidence and practice wisdom, Glasgow: The Institute for Research and Innovation in Social Services.

Johnson, M and Austin, M (2005), Evidence-based Practice in the Social Services: Implications for Organisational Change, University of California, Berkeley.

Munro, E (2004), The impact of audit on social work practice, British Journal of Social Work, 34:8, pp. 1073-1095.

Nutley, S; Morton, S; Jung, T; Boaz, A (2010), Evidence and policy in six European countries: diverse approaches and common challenges, Evidence & Policy, 6:2, pp. 131-44.

Otto, H and Ziegler, H (2008), The Notion of Causal Impact in Evidence-Based Social Work: An Introduction to the Special Issue on What Works?, Research on Social Work Practice, 18:4, pp. 273-276.

Shonkoff, J (2000), Science, Policy, and Practice: Three Cultures in Search of a Shared Mission, Child Development, 71:1, pp. 181-187.